Social media may be about personal connections, but not every connection is valuable. I am reminded of this every time I post a technical piece to my Facebook account and a half-dozen old schoolmates and other “real life” friends respond with something like “I have no idea what this means, but I bet it’s really interesting to someone!” Their response demonstrates two things:

- Every one of us exists in multiple social circles, and sometimes we ourselves are the only overlap

- People who care about us will read what we write, but not every view is relevant to the topic at hand

This is a serious pitfall of many of the metrics we use to measure online success: Visitors and views are nice, but not every one is valid. It's easy to fool ourselves and think that thousands of followers or friends means vast influence. But how many of these people really care what you have to say about a given topic? Very few.

Basic Metrics

Online metrics are incredibly simplistic. Consider the following baker’s dozen of familiar web metrics:

- Google Analytics – Anyone who views a web site (and whose browser executes Google's JavaScript code without opting out) counts

  - Visits – "The number of individual sessions initiated by all visitors to your site", where a session ends after 30 minutes of inactivity. A return visit by the same person after a longer break counts as a new visit!

  - Visitors – Identifiably unique users visiting your site "during any given date range" are visitors. There is no standard time or date range, so one site might count visitors daily, while another might count all-time visitors. Google Analytics reports default to one month.

  - Pageviews – A view of a page that is being tracked is reported as a pageview. If a user reloads a page 100 times, that's 100 pageviews.

  - Unique Pageviews – "The number of (visits) during which a page was viewed one or more times." In other words, this is how often a page is viewed, with reloads and repeat views by the same user during a single visit removed.

  - Time on site – How long a visit lasts is often considered a measure of site quality, but it can be misleading since many people leave many browser tabs open at once.

- Twitter – Although not used by everyone, Twitter has become critical to an active subset of Internet users

  - Followers – Any Twitter user can "follow" any other, opting to include their updates in a stream they may (or may not) be watching

  - Retweets – Although not invented by Twitter, having an update retweeted is one of the more powerful forms of validation that someone is paying attention

  - Clickthrough – Many people post links to their Twitter stream, and services like Bit.ly track the clicks on these links

- Facebook – Although more social than business-oriented, Facebook is rapidly becoming "the Internet" to a large number of people, just like AOL way back in the 1990s.

  - Friends – Unlike Twitter, Facebook requires a mutual opt-in to become "Friends". Some people are promiscuous with friend requests, others are more selective. See also LinkedIn Contacts.

  - Likes – Akin to a retweet, a Facebook Like means someone noticed an item and opted to highlight it.

  - Comments – More serious than a Like, a comment requires more engagement and thought. The same holds true of comments on other services, including Buzz and blogs.

- RSS subscribers – RSS is used by fewer than 15% of blog readers, but is essential to them. Subscriber stats (as tracked by FeedBurner and similar services) are often trumpeted, though re-shares in Google Reader or Buzz or on Twitter are probably better indicators of readership. See also podcast subscribers.

- Email subscribers – Email remains a prime means of interacting online for many users, and email subscriptions often produce better long-term engagement and repeat business.
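The web-analytics definitions above are easy to blur together, so here is a minimal Python sketch of how they might be derived from a raw log of page hits. It assumes a hypothetical `Hit` record carrying a stable per-visitor ID (in practice, usually a cookie) and approximates the default Google Analytics rule that a visit ends after 30 minutes of inactivity. It is an illustration of the definitions, not how Google Analytics actually computes its reports.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Assumption: approximates the default Google Analytics 30-minute inactivity timeout.
SESSION_TIMEOUT = timedelta(minutes=30)


@dataclass(frozen=True)
class Hit:
    visitor_id: str  # hypothetical stable visitor identifier (e.g. from a cookie)
    page: str
    ts: datetime


def sessionize(hits):
    """Group hits into visits; a new visit starts after 30+ minutes of inactivity."""
    sessions = []
    for hit in sorted(hits, key=lambda h: (h.visitor_id, h.ts)):
        current = sessions[-1] if sessions else None
        if (current is not None
                and current[-1].visitor_id == hit.visitor_id
                and hit.ts - current[-1].ts < SESSION_TIMEOUT):
            current.append(hit)  # same visitor, still active: same visit
        else:
            sessions.append([hit])  # new visitor or idle too long: new visit
    return sessions


def summarize(hits):
    sessions = sessionize(hits)
    return {
        "pageviews": len(hits),  # every load counts, reloads included
        # One unique pageview per distinct page per visit.
        "unique_pageviews": sum(len({h.page for h in s}) for s in sessions),
        "visits": len(sessions),
        "visitors": len({h.visitor_id for h in hits}),
        # First-to-last hit per visit, so a one-page visit contributes zero.
        "time_on_site_s": sum((s[-1].ts - s[0].ts).total_seconds() for s in sessions),
    }


t0 = datetime(2010, 9, 1, 9, 0)
hits = [
    Hit("alice", "/post", t0),
    Hit("alice", "/post", t0 + timedelta(minutes=1)),   # reload
    Hit("alice", "/about", t0 + timedelta(minutes=5)),
    Hit("alice", "/post", t0 + timedelta(minutes=50)),  # 45 idle minutes: new visit
    Hit("bob", "/post", t0 + timedelta(minutes=2)),
]
print(summarize(hits))
# {'pageviews': 5, 'unique_pageviews': 4, 'visits': 3, 'visitors': 2, 'time_on_site_s': 300.0}
```

Five hits from just two people can be honestly reported as 5 pageviews, 4 unique pageviews, 3 visits, or 2 visitors, and two of the three visits register no time on site at all. That spread is exactly why a bare "views" figure says so little.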

This list could go on and on, but one critical element is missing with most common metrics: Meaning. Does it matter if a blog post is "Dugg" 1,000 times? That's certainly better than it being ignored. Does it matter if a YouTube video is viewed 1,000,000 times? That's probably an indication it was infectious. But what result comes of it?

Who really cares?

Merely attracting attention is not enough – one must also engage with one’s audience in a meaningful way. Answering this core question of engagement is problematic at best. Who really cares about your message? How will you know if you have reached them?

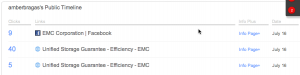

This question came up regarding EMC's much-touted viral video campaign, which included some questionable tactics to attract page and video views. A similar tempest is currently swirling around Silicon Angle's claims that "200,000 viewers" watched their VMworld 2010 video stream. But these discussions too often focus on the metrics instead of the core question: What metric should be used to demonstrate success?

Since both of these examples are in the enterprise IT space, let's look at that niche in reverse. How many people influence IT buy-versus-don't-buy decisions? Most enterprises employ a large IT staff, but only certain people are able to influence product selection. Given the choice between EMC and NetApp, VMware and Citrix, Cisco and Juniper, or Dell and HP, the decision lies in the hands of tens of thousands of people worldwide, not millions. They may be influenced by a larger audience susceptible to branding and advertising, but buying power is concentrated in the hands of this small group.

Even the most interesting enterprise IT topics are truly relevant to just a few thousand individuals. A specialized blog or video could be considered a runaway success with just a few hundred views if every one was relevant and engaged, while millions of hits on another could result in little real impact. Consider Simon Long's Gestalt IT post, "Do I Upgrade to VMware Virtual Hardware Version 7?", as an example. The fact that it has averaged 400 unique pageviews for months demonstrates that this topic is both relevant and vital to the VMware community. Each of these visits is worth thousands of casual clicks on a funny viral video! These visitors really care!

Better Metrics

It has always been frustratingly difficult to measure marketing effectiveness. I was recently discussing the glossy-magazine age with a veteran IT marketer, and he was chuckling about their painful and imprecise metrics for advertising success. Circulation numbers were publicized and audited, but who was to say how many readers were waiting on the other end? For every tattered and inspected issue of Byte there was another lying unread in a dumpster. Contests, special offers, and reader response cards were pretty much the best one could hope for in the printed age.

Web metrics could be so much better. Services like Crazy Egg can even build heat maps, demonstrating user interaction with a web page's elements. We are drowning in data, but most of us are unable to extract real value from it all. Google Analytics doesn't help, offering up only marginally useful statistics by default. But the real problem lies inside each of us.

Most marketers, pundits, and social media gadflies have only a passing understanding of web metrics and statistics, and only marginal interest in precision and disclosure. Many confuse "unique pageviews" with "visits" or use imprecise terms like "hits" and "views" when describing their success. We haven't considered how we might measure effectiveness, let alone developed an ecosystem to measure and report it. And, unlike in print publishing, disclosure and auditing of circulation are rare.

Many Internet marketers are happy with the status quo since it lets them confuse the market and tout success that might prove dubious if examined more closely. Others don’t care either way: The shotgun approach is so cost-effective that missed targets have no real impact. But solid metrics do matter, especially when money is at stake. Advertisers and sponsors should not accept wild claims that stretch credibility, and publishers should not be enticed to stretch the truth. I would rather support a site with a few hundred quality, engaged visitors than millions of casual surfers. I imagine most would agree.

Image credit: Crate Expectations by Thristian